In this tutorial, we demonstrate how to build an AI assistant by integrating LangChain, Gemini 2.0 Flash, and the Jina Search tool. By combining a large language model (LLM) with an external search API, we create an assistant that can provide up-to-date information with citations. The tutorial walks through installing the necessary libraries, setting up API keys, binding tools to the Gemini model, and building a custom LangChain chain that calls the search tool whenever the model needs fresh or specific information. By the end, we will have a working, interactive assistant that answers user queries with current, sourced information.

We begin by installing the required Python packages for this project. These include the LangChain framework for building AI applications, LangChain Community tools (version 0.2.16 or higher), and LangChain’s integration with Google Gemini models. Together, these packages let us use Gemini models and external tools within LangChain pipelines.
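The install command itself does not appear in this text. A command along the lines of `pip install -U langchain "langchain-community>=0.2.16" langchain-google-genai` would match the description, though the exact flags are an assumption. As a minimal sketch, the following checks that the three packages are present and that `langchain-community` meets the stated minimum. The helper names `parse_version` and `check_requirements` are hypothetical and not part of any library.

```python
from importlib import metadata

# Distribution names follow the paragraph above; the only version bound the
# text states is langchain-community >= 0.2.16.
REQUIRED = {
    "langchain": None,
    "langchain-community": (0, 2, 16),
    "langchain-google-genai": None,
}


def parse_version(text):
    """Turn '0.2.16' into (0, 2, 16), stopping at the first non-numeric part."""
    parts = []
    for piece in text.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
    return tuple(parts)


def check_requirements(required=REQUIRED, get_version=metadata.version):
    """Return a list of human-readable problems; an empty list means all good."""
    problems = []
    for name, minimum in required.items():
        try:
            installed = get_version(name)
        except metadata.PackageNotFoundError:
            problems.append(f"{name} is not installed")
            continue
        if minimum is not None and parse_version(installed) < minimum:
            wanted = ".".join(str(n) for n in minimum)
            problems.append(f"{name} {installed} is older than {wanted}")
    return problems
```

Calling `check_requirements()` in a fresh notebook cell and printing the returned list is a quick way to catch a stale `langchain-community` before any tool code runs.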

    import getpass
    import os
    import json
    from typing import Dict, Any

We import the standard-library modules the project needs. The getpass module lets us enter API keys without displaying them on the screen, while os manages environment variables and file paths. The json module handles JSON data structures, and typing provides type hints for values such as dictionaries and function arguments, which improves readability and maintainability.

We ensure that the necessary API keys for Jina and Google Gemini are set as environment variables. If the keys are not already defined in the environment, the script prompts the user to enter them using the getpass module, which keeps the keys hidden from view. This approach gives the rest of the code access to both services without hardcoding sensitive information.
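The key-setup code is not shown in this text, so the following is only a sketch of the pattern the paragraph describes. The helper name `ensure_env_key` is hypothetical, and the variable names `JINA_API_KEY` and `GOOGLE_API_KEY` are assumptions based on what the LangChain integrations commonly read.

```python
import os
from getpass import getpass


def ensure_env_key(name, prompt=getpass, env=os.environ):
    """Set env[name] from a hidden prompt unless it already has a value."""
    if not env.get(name):
        env[name] = prompt(f"Enter your {name}: ")
    return env[name]


def setup_keys():
    """Prompt for both keys if needed (variable names are assumptions)."""
    ensure_env_key("JINA_API_KEY")
    ensure_env_key("GOOGLE_API_KEY")
```

Passing `prompt` and `env` as parameters is a design choice for testability: the same function can be exercised with a plain dictionary and a stub prompt, without touching the real environment.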

    from langchain_community.tools import JinaSearch
    from langchain_google_genai import ChatGoogleGenerativeAI
    from langchain_core.prompts import ChatPromptTemplate
    from langchain_core.runnables import RunnableConfig, chain
    from langchain_core.messages import HumanMessage, AIMessage, ToolMessage

    print("🔧 Setting up tools and model…")

We import key modules and classes from the LangChain ecosystem. These include the JinaSearch tool for web search, the ChatGoogleGenerativeAI model for accessing Google’s Gemini, and essential classes from LangChain Core: ChatPromptTemplate, RunnableConfig, the chain decorator, and the message types (HumanMessage, AIMessage, and ToolMessage). Together, these components let Gemini call external tools to retrieve information on demand. The print statement confirms that the setup process has begun.

    # Create the Jina Search tool; it reads the Jina API key from the environment
    search_tool = JinaSearch()
    print(f"✅ Jina Search tool initialized: {search_tool.name}")

    print("\n🔍 Testing Jina Search directly:")
    direct_search_result = search_tool.invoke({"query": "what is langgraph"})
    print(f"Direct search result preview: {direct_search_result[:200]}…")

We initialize the Jina Search tool by creating an instance of JinaSearch() and confirming it’s ready for use. The tool is designed to handle web search queries within the LangChain ecosystem. The script then runs a direct test query, “what is langgraph”, using the invoke method, and prints a preview of the search result. This step verifies that the search tool is functioning correctly before integrating it into a larger AI assistant workflow.

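    # Gemini 2.0 Flash chat model (gemini_model is an illustrative variable name)
    gemini_model = ChatGoogleGenerativeAI(
        model="gemini-2.0-flash",
        temperature=0.1,
        convert_system_message_to_human=True
    )
    print("✅ Gemini model initialized")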

We initialize the Gemini 2.0 Flash model using the ChatGoogleGenerativeAI class from LangChain. The model is set with a low temperature (0.1) for more deterministic responses. The convert_system_message_to_human=True parameter tells the integration to fold the system prompt into the human message, a workaround for Gemini API versions that do not accept a separate system role. The final print statement confirms that the Gemini model is ready for use.

    detailed_prompt = ChatPromptTemplate.from_messages([
        ("system", """You are an intelligent assistant with access to web search capabilities.
    When users ask questions, you can use the Jina search tool to find current information.

    Instructions:
    1. If the question requires recent or specific information, use the search tool
    2. Provide comprehensive answers based on the search results
    3. Always cite your sources when using search results
    4. Be helpful and informative in your responses"""),
        ("human", "{user_input}"),
        ("placeholder", "{messages}"),
    ])

We define a prompt template using ChatPromptTemplate.from_messages() that guides the AI’s behavior. It includes a system message outlining the assistant’s role, a human message placeholder for user queries, and a placeholder for tool messages generated during tool calls. This structured prompt ensures the AI provides helpful, informative, and well-sourced responses while seamlessly integrating search results into the conversation.
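To make the role of the placeholder concrete, here is a plain-Python model of how the three template entries turn into a message list. It is not LangChain's implementation, only a sketch of the behavior: the placeholder is optional, so the first pass (no tool results yet) yields just the system and human messages, and the second pass appends whatever messages are supplied. The names `SYSTEM_TEXT` and `build_messages` are hypothetical.

```python
SYSTEM_TEXT = (
    "You are an intelligent assistant with access to web search capabilities."
)


def build_messages(user_input, messages=None):
    """Mimic the template: system, then human, then any placeholder messages."""
    result = [("system", SYSTEM_TEXT), ("human", user_input)]
    # The placeholder expands to zero or more messages, in order.
    result.extend(messages or [])
    return result


first_pass = build_messages("what is langgraph")
second_pass = build_messages(
    "what is langgraph",
    messages=[("ai", "<tool call request>"), ("tool", "<search results>")],
)
```

Here `first_pass` holds two entries and `second_pass` holds four, which mirrors why the same template can serve both the initial request and the follow-up that carries tool output.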

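    # Let Gemini request the search tool when it needs fresh information
    gemini_with_tools = gemini_model.bind_tools([search_tool])
    print("✅ Tools bound to Gemini model")

    # Prompt template piped into the tool-enabled model
    main_chain = detailed_prompt | gemini_with_tools

    def format_tool_result(tool_call: Dict[str, Any], tool_result: str) -> str:
        """Format tool results for better readability"""
        return f"Search Results for '{tool_call['args']['query']}':\n{tool_result[:800]}…"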

We bind the Jina Search tool to the Gemini model using bind_tools(), enabling the model to invoke the search tool when needed. The main_chain combines the structured prompt template and the tool-enhanced Gemini model, creating a seamless workflow for handling user inputs and dynamic tool calls. Additionally, the format_tool_result function formats search results for a clear and readable display, ensuring users can easily understand the outputs of search queries.
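As a small illustration of what the formatter produces, the sketch below calls it on a hand-built tool call. The dictionary shape (`name`, `args`, `id`) follows the tool-call structure the tutorial indexes into, while the concrete values, including the tool name `jina_search` and the ID `call_1`, are made up for the example. The function is repeated here with an ASCII ellipsis so the block runs on its own.

```python
from typing import Any, Dict


def format_tool_result(tool_call: Dict[str, Any], tool_result: str) -> str:
    """Header with the query, then at most 800 characters of the result."""
    return f"Search Results for '{tool_call['args']['query']}':\n{tool_result[:800]}..."


# Hypothetical tool call, shaped like the entries in ai_response.tool_calls
sample_call = {
    "name": "jina_search",
    "args": {"query": "what is langgraph"},
    "id": "call_1",
}
sample_result = "x" * 1000  # stand-in for a long search payload

formatted = format_tool_result(sample_call, sample_result)
header, body = formatted.split("\n", 1)
```

The header line carries the query, and the body is truncated to 800 characters plus the trailing ellipsis, which keeps console output manageable when a search returns a large payload.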

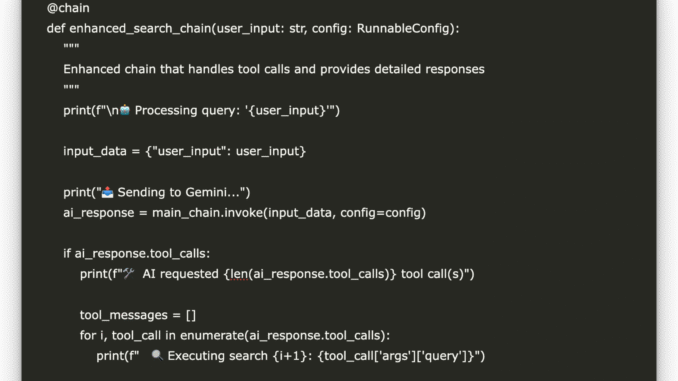

    @chain
    def enhanced_search_chain(user_input: str, config: RunnableConfig):
        """
        Enhanced chain that handles tool calls and provides detailed responses
        """
        print(f"\n🤖 Processing query: '{user_input}'")
        input_data = {"user_input": user_input}

        # First pass: the model either answers or requests a search
        print("📤 Sending to Gemini…")
        ai_response = main_chain.invoke(input_data, config=config)

        if ai_response.tool_calls:
            print(f"🛠️ AI requested {len(ai_response.tool_calls)} tool call(s)")
            tool_messages = []
            for i, tool_call in enumerate(ai_response.tool_calls):
                print(f" 🔍 Executing search {i+1}: {tool_call['args']['query']}")
                # Pass only the arguments so the tool returns its raw string output
                tool_result = search_tool.invoke(tool_call['args'])
                tool_msg = ToolMessage(
                    content=tool_result,
                    tool_call_id=tool_call['id']
                )
                tool_messages.append(tool_msg)

            # Second pass: resend the query together with the tool results
            print("📥 Getting final response with search results…")
            final_input = {
                **input_data,
                "messages": [ai_response] + tool_messages
            }
            final_response = main_chain.invoke(final_input, config=config)
            return final_response
        else:
            print("ℹ️ No tool calls needed")
            return ai_response

We define the enhanced_search_chain using the @chain decorator from LangChain, enabling it to handle user queries with dynamic tool usage. It takes a user input and a configuration object, passes the input through the main chain (which includes the prompt and Gemini with tools), and checks if the AI suggests any tool calls (e.g., web search via Jina). If tool calls are present, it executes the searches, creates ToolMessage objects, and reinvokes the chain with the tool results for a final, context-enriched response. If no tool calls are made, it returns the AI’s response directly.
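The two-pass control flow can be studied without any API keys by swapping the model and the search tool for stubs. The sketch below is a simplified stand-in, not the tutorial's chain: `FakeAIMessage`, `fake_model`, `fake_search`, and `run_with_tools` are all hypothetical names, and the stub model simply requests one search on the first pass and summarizes the tool output on the second.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class FakeAIMessage:
    """Tiny stand-in for a model reply: text plus optional tool requests."""
    content: str
    tool_calls: List[Dict[str, Any]] = field(default_factory=list)


def fake_model(payload):
    """First pass asks for a search; second pass answers from tool output."""
    if not payload.get("messages"):
        call = {"name": "search", "args": {"query": payload["user_input"]}, "id": "call_1"}
        return FakeAIMessage("", [call])
    tool_outputs = [m["content"] for m in payload["messages"] if isinstance(m, dict)]
    return FakeAIMessage("Answer based on: " + "; ".join(tool_outputs))


def fake_search(args):
    """Stub search tool that echoes the query."""
    return f"results for {args['query']}"


def run_with_tools(user_input, model=fake_model, search=fake_search):
    """Invoke the model, run any requested tools, then invoke it again."""
    payload = {"user_input": user_input}
    first = model(payload)
    if not first.tool_calls:
        return first  # the model answered directly
    tool_messages = [
        {"content": search(call["args"]), "tool_call_id": call["id"]}
        for call in first.tool_calls
    ]
    # Second pass carries the original request plus the tool results.
    return model({**payload, "messages": [first] + tool_messages})
```

Each tool result is paired with the ID of the call that requested it, which is the same bookkeeping the ToolMessage objects perform in the real chain.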

    def test_search_chain():
        """Test the search chain with various queries"""
        test_queries = [
            "what is langgraph",
            "latest developments in AI for 2024",
            "how does langchain work with different LLMs"
        ]

        print("\n" + "=" * 60)
        print("🧪 TESTING ENHANCED SEARCH CHAIN")
        print("=" * 60)

        for i, query in enumerate(test_queries, 1):
            print(f"\n📝 Test {i}: {query}")
            print("-" * 50)
            try:
                response = enhanced_search_chain.invoke(query)
                print(f"✅ Response: {response.content[:300]}…")
                if hasattr(response, 'tool_calls') and response.tool_calls:
                    print(f"🛠️ Used {len(response.tool_calls)} tool call(s)")
            except Exception as e:
                print(f"❌ Error: {str(e)}")
            print("-" * 50)

The function, test_search_chain(), validates the entire AI assistant setup by running a series of test queries through the enhanced_search_chain. It defines a list of diverse test prompts, covering tools, AI topics, and LangChain integrations, and prints results, indicating whether tool calls were used. This helps verify that the AI can effectively trigger web searches, process responses, and return useful information to users, ensuring a robust and interactive system.

    if __name__ == "__main__":
        print("\n🚀 Starting enhanced LangChain + Gemini + Jina Search demo…")
        test_search_chain()

        print("\n" + "=" * 60)
        print("💬 INTERACTIVE MODE – Ask me anything! (type 'quit' to exit)")
        print("=" * 60)

        while True:
            user_query = input("\n🗣️ Your question: ").strip()
            if user_query.lower() in ['quit', 'exit', 'bye']:
                print("👋 Goodbye!")
                break
            if user_query:
                try:
                    response = enhanced_search_chain.invoke(user_query)
                    print(f"\n🤖 Response:\n{response.content}")
                except Exception as e:
                    print(f"❌ Error: {str(e)}")

Finally, we run the AI assistant as a script when the file is executed directly. It first calls the test_search_chain() function to validate the system with predefined queries, ensuring the setup works correctly. Then, it starts an interactive mode, allowing users to type custom questions and receive AI-generated responses enriched with dynamic search results when needed. The loop continues until the user types ‘quit’, ‘exit’, or ‘bye’, providing an intuitive and hands-on way to interact with the AI system.
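One possible variation, sketched here under the assumption that the loop should be testable without a live model, is to pass the answering function and the input/output functions in as parameters. The names `EXIT_WORDS` and `chat_loop` are hypothetical; in the tutorial's setting, `answer` would wrap a call to the search chain.

```python
EXIT_WORDS = {"quit", "exit", "bye"}


def chat_loop(answer, read=input, write=print):
    """Read questions until an exit word; report errors instead of crashing."""
    while True:
        query = read("\nYour question: ").strip()
        if query.lower() in EXIT_WORDS:
            write("Goodbye!")
            break
        if not query:
            continue  # ignore empty input and prompt again
        try:
            write(answer(query))
        except Exception as exc:
            write(f"Error: {exc}")
```

With this shape, a scripted list of inputs and a list that collects outputs are enough to exercise the exit words, the empty-input case, and the error path.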

In conclusion, we’ve successfully built an enhanced AI assistant that leverages LangChain’s modular framework, Gemini 2.0 Flash’s generative capabilities, and Jina Search’s real-time web search functionality. This hybrid approach demonstrates how AI models can expand their knowledge beyond static data, providing users with timely and relevant information from reliable sources. You can now extend this project further by integrating additional tools, customizing prompts, or deploying the assistant as an API or web app for broader applications. This foundation opens up endless possibilities for building intelligent systems that are both powerful and contextually aware.

Check out the Notebook on GitHub.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence Media Platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform boasts over 2 million monthly views, illustrating its popularity among audiences.
